If you haven’t read the short story ‘There Will Come Soft Rains,’ do so now. (At the very least, it will help you understand the rest of this article.) It was first published 69 years ago in Collier’s magazine, but don’t pass it by as a historical curiosity. At a surface level [spoiler alert], it’s a story about the last days of an abandoned, high-tech house. But really it’s a memento mori and a prescient warning about automation, war, and wealth, and it raises questions about how these things might be linked. We are still figuring out our relationship to automation, still grappling with the horrors of war, and still suffering the cruelty of wealth inequality, so the story remains relevant.

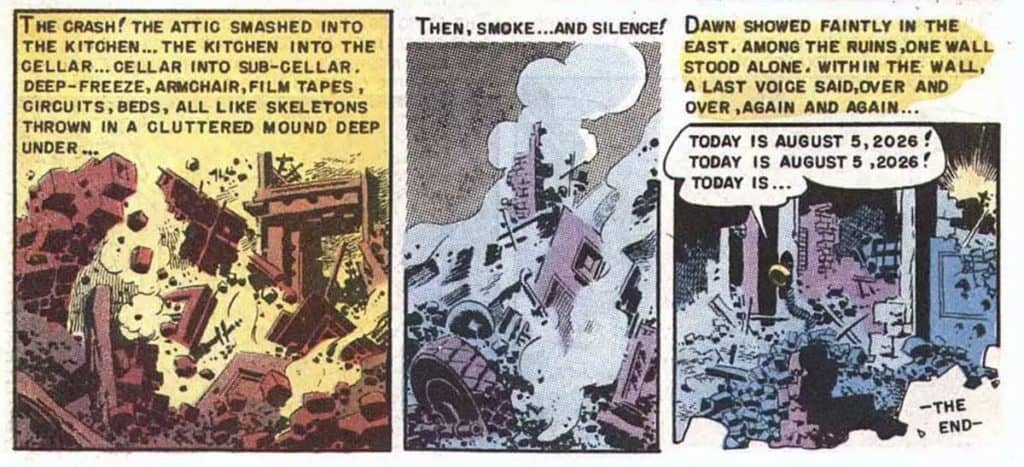

I suspect people will be paying more attention to it in the near future, since the story’s events happen over the 4th and 5th of August 2026, only seven years in the future as I sit writing this article. At the moment, the U.S. is “only” at war with Afghanistan, drugs, and reason, and yet most of us would still be surprised if by that date our cities had been reduced to green, glowing piles of rubble with fully automated houses in the exurbs. Also, all three of the Allendale, Californias are near where I live, so I really hope this stays fiction.

Despite my love for it, I must admit that some aspects of this story ring false for modern readers. Some of the technological paradigms are outdated. Could it be updated to engage and challenge readers once again?

Admittedly, as a writer I’m no Ray Bradbury, but I’ve been reviewing speculative technology for the past seven or so years, so I’m in a good position to critique it. I’ve also been practising interaction design for 25 years, so I’m in a good place to suggest improvements. Together, the following changes might make the story more internally consistent and bring it into line with the modern state of tech. So let’s imagine we were going to pen a speculative update of the story. What follows are thoughts for that update.

Note that I’m not merely interested in the design of a smart home. That’s a fine goal per se, but here I want to make improvements to the story’s speculative technology in a way that keeps the essence of the story—and its themes—intact.

Let’s begin by dispensing with some small recommendations about the tech. Stuff like:

- If you set the alarm earlier, the prompts won’t need to be so urgent, like the haranguing, “off to school, off to work, run, run, eight-one!”

- Maybe have the house re-route uneaten food to a compost bin rather than just dumping it directly into the sea. (Were the 1950s that lackadaisical about the environment?)

- Don’t have the sprinklers running while it’s raining. That’s a waste of water.

- Have the garage door open only if someone is actually in the car. We’ll talk more about security in a bit.

- Or, hey, if you can automate breakfast, how about automatic bill payment? Or automated feeding of the dog? I understand that the internet wasn’t available in the mid-century, when this was written, but as long as you’re imagining narrow-AI lectors in the study…

- Replace references to “film tapes” and “attic brains” and the like with modern equivalents like “on-prem data backups” and “computer.”

- It seems a mismatch that the man’s silhouette was of him mowing a lawn, when it later mentions that the house has “remote control” lawn mowers. Perhaps omit reference to the latter, or have the man doing something else. He owns Picassos, after all, and may not be the lawn-mowing type.

So, okay. Those quick hits conveyed, let’s move on to some bigger topics.

Let’s humanize the industrial design

Let’s talk about the style of the industrial design. I know the point of the story is to illustrate how horrible all this automation is, but I must say the style choices seem over-the-top. Let’s not have the incinerator look like an ancient archdemon. Let’s not have the little robot mice, the story’s Roombas, seem angry about having to do their jobs. Let’s not design the fire suppression nozzles to look like “blank robot faces.”

Pareidolia is a real phenomenon: when you put a face in your design, users cannot help but respond to its expression. I’d rather omit faces from the design altogether, but if you must have them, how about making them positive? If the story is really going for bleakness, how much worse is a system that looks delightful and usable, but is actually horrifying? Think Hello Kitty murderbots.

Style is also conveyed through the behavior of systems, not just their surfaces. By having the windows snap shut at the mere brush of a bird wing, you’re making the place sterile at best and anxiety-producing at worst. If, narratively, you want the owners to have been germophobes, then indicate somewhere in the story that the house is adhering to their preferences. Something like,

“…in the owner’s quirky preoccupation with self-protection, which bordered on paranoia…”

Otherwise, who would have bought the damned thing in the first place?

Let’s use modern authentication

That same paragraph in the story raises a point about authentication. It implies that all that’s needed to enter the house is a spoken password. While that does J.R.R. Tolkien and the Doors of Durin proud, neither the Mines of Moria nor our fictional house is secure from interlopers with single-factor authentication. What if the wrong person learns the password?

Two-factor authentication is the modern standard: it requires something the user knows plus something the user either is or possesses. Face recognition is rightly being vilified in civic infrastructure and police technology at the moment, but I think it’s still okay for speculative dystopias. So, in our revised story, the house should not ask passing foxes for passwords. Instead, its image recognition should register high confidence that the visitors it has detected are neither people nor the family dog, and so not ask them for authorization at all.
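To make the door’s decision concrete, here is a minimal Python sketch of that logic. Everything in it is hypothetical: the labels, the 0.9 confidence threshold, and the assumption that the image classifier, the password check, and the face match arrive as simple inputs are illustrative choices, not details from the story or any real product.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; not from the story or a real product.
CONFIDENCE_THRESHOLD = 0.9
AUTHORIZED_ANIMALS = {"family_dog"}


@dataclass
class Detection:
    label: str         # classifier's best guess, e.g. "person", "family_dog", "fox"
    confidence: float  # classifier confidence, from 0.0 to 1.0


def door_response(detection: Detection,
                  password_ok: bool = False,
                  face_match_ok: bool = False) -> str:
    """Decide what the front door does about one detected visitor."""
    if detection.confidence < CONFIDENCE_THRESHOLD:
        # Unsure what is out there: stay locked rather than guess.
        return "stay_locked"
    if detection.label in AUTHORIZED_ANIMALS:
        # The dog is authorized by recognition alone.
        return "open"
    if detection.label != "person":
        # Foxes and cats are confidently not people, so no password prompt.
        return "ignore"
    # People need two factors: something they know (the spoken password)
    # and something they are (a face matching an enrolled resident).
    if password_ok and face_match_ok:
        return "open"
    return "ask_for_credentials"


print(door_response(Detection("fox", 0.97)))                  # ignore
print(door_response(Detection("person", 0.95), True, False))  # ask_for_credentials
```

Note the design choice: for a person, a correct password alone is never enough to open the door.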

Let’s prioritize safety scenarios

Let’s talk safety. There are some simple interventions we should include, like adding chimney caps so sparks don’t spread fire along the conveyor belt of convecting air there. Maybe fire-resistant sleeves for the wiring so it doesn’t curl up in the fire? I know Bradbury was writing in the era of knob-and-tube wiring, but I’m sure fire marshals would not approve today. Bradbury used the imagery of curling wires to illustrate the destruction of the house’s computer infrastructure, so let’s replace it with the sensor-laden mice curling up and frying in the heat.

Oh, and one note for believability: the lowest melting point of copper alloys like bronze is around 850°C (1562°F), which is higher than the roughly 593°C (1100°F) reached in a common house fire. So maybe swap out the housing of the speculative “attic brain” for a tin alloy. Or a plastic. We’re lousy with that stuff nowadays. Also, computers are now small enough to fit inside a protective black box, so you’d have to find some way to explain why the brain is exposed to the fire at all.

While the house is in panic mode, the second thing it should do (after trying to alert the inhabitants) is alert service professionals. Sick dog detected and owners nowhere to be found? The house should try to contact the veterinarian. Fire breaking out? The house should try to contact the fire department. Sure, in the story these professionals are also smears of carbon by the time the fire happens, but showing the house trying keeps it from looking like it has never heard of the internet.
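The escalation order above fits in a few lines. In this minimal Python sketch, the event names, the escalation table, and the `notify` callback (a stand-in for whatever messaging the house uses) are all hypothetical assumptions. A fire always goes to the fire department, while a sick dog goes to the vet only if the owners don’t answer.

```python
from typing import Callable, NamedTuple


class Escalation(NamedTuple):
    professional: str            # who to call after the inhabitants
    only_if_owners_silent: bool  # skip the call if the owners acknowledge


# Hypothetical escalation table; the event names are illustrative assumptions.
ESCALATIONS = {
    "fire": Escalation("fire_department", only_if_owners_silent=False),
    "sick_dog": Escalation("veterinarian", only_if_owners_silent=True),
}


def handle_emergency(event: str, notify: Callable[[str], bool]) -> list[str]:
    """Alert the inhabitants first, then the relevant professional.

    notify(party) returns True if someone acknowledged the alert.
    """
    contacted = ["inhabitants"]
    owners_acknowledged = notify("inhabitants")
    rule = ESCALATIONS.get(event)
    if rule is not None and not (owners_acknowledged and rule.only_if_owners_silent):
        notify(rule.professional)  # keep trying, even if nobody answers
        contacted.append(rule.professional)
    return contacted


# In the story nobody answers anything, but the house still tries.
print(handle_emergency("fire", lambda party: False))
# ['inhabitants', 'fire_department']
```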

Quick note: it’s a bad idea to keep flammables above heat sources or electrical appliances. The story wouldn’t be the same without the conflagration, so we need something else to start it. I have an idea how the dog might start it (see below), but the original cause illustrates only careless chemical storage, not dehumanizing automation.

Let’s add contextual awareness

Why doesn’t the house know the people are missing? Sure, it’s dramatic, but it makes the house look… well… dumb. If it can detect the dog by voiceprint, as it does, and has enough natural language understanding to understand arbitrary spoken poem titles, as it does, it should at the very least be able to detect that the occupants have gone silent. Even the nursery seems to recognize the context of the fire, when the VR animals stampede. But the house still thinks there are people there, so it continues preparing food and bridge games and cigars?

And this is only with the tech Bradbury describes directly in the text. I’d be surprised if such a house didn’t have sensors to note that bathwater is going unused, doors aren’t being opened and closed, and none of the food is being eaten (if only by weighing the plates before and after each meal). The most reasonable inference for the computer is that nobody’s home, and so it should stop wasting food, water, energy, and cigars.

You might think thermonuclear war is an edge case, but an empty house doesn’t depend on a cataclysm. The same logic covers the vacation scenario, or the family being away for an emergency, like an earthquake. It’s set in California, after all.
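The inference described above can be sketched as a simple rule: any sign of life counts, and several days without one means nobody is home. In this minimal Python sketch, the sensor fields, the 50-gram threshold for “food eaten,” and the two-day window are hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class DaySignals:
    """One day of hypothetical sensor summaries; the fields are assumptions."""
    human_utterances: int    # speech matched to household voiceprints (not the dog)
    door_openings: int       # doors opened by people
    bathwater_used: bool     # did anyone use the bath that was drawn?
    food_eaten_grams: float  # plate weight before minus after each meal


def looks_occupied(day: DaySignals, min_eaten_grams: float = 50.0) -> bool:
    """Any single sign of life counts as someone being home that day."""
    return (day.human_utterances > 0
            or day.door_openings > 0
            or day.bathwater_used
            or day.food_eaten_grams >= min_eaten_grams)


def household_absent(history: list[DaySignals], quiet_days: int = 2) -> bool:
    """True only if each of the last `quiet_days` days shows no sign of life."""
    if quiet_days < 1:
        raise ValueError("quiet_days must be at least 1")
    recent = history[-quiet_days:]
    return len(recent) == quiet_days and not any(looks_occupied(d) for d in recent)


busy = DaySignals(human_utterances=40, door_openings=25,
                  bathwater_used=True, food_eaten_grams=900.0)
quiet = DaySignals(human_utterances=0, door_openings=0,
                   bathwater_used=False, food_eaten_grams=0.0)
print(household_absent([busy, quiet, quiet]))  # True: pause meals, baths, and cigars
```

Because the check reruns every day, the first sign of life after a vacation flips it back, so the house never needs to be told the family is home.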

Let’s stop thinking in terms of automation

I can see how the whole story reads like a critique of technology itself. But I’d counter that it’s really about the bad design of technology. The house is designed as automation, and that’s the real problem. Systems built to work with humans should entail humane interactions with those humans. Automation is rarely the right answer.

If we’re going to realize the value of modern technology, having it do work for us, we must find something between the states of manual and automatic. That leads me to a mode of interaction I wrote a book about, which I have dubbed “agentive” to distinguish it from “assistant” technologies. I’d assert that if we rethink the house with agentive concepts, the story can still imply that the house had a good, human-centric design. I don’t expect you to know the concept already, but see below for where to learn more about it.

Closer

Thinking in these terms suggests a number of changes for a modernized version of the story.

- The alarm can still go off, but a little bit earlier.

- The house can still clean itself. Roombas are a favorite example of agentive tech, and the little mice are like small, swarming versions of that.

- The industrial design should simply acknowledge technology, and not try to invoke Baal or Moloch directly.

- Make a note somewhere that the house shoos away birds as per the homeowner’s preferences.

- The house should poll its sensors and sensor records for new evidence that its humans have returned.

- Maybe it can automatically compost the ingredients that have spoiled since the last check, and send out an order for replacements, along with a note of frustration that the last order was not fulfilled.

- The dog still gets in because it’s authorized, but the house notices the dog’s poor health and attempts to call the vet. Noting the critical situation, the house would override the owner’s preferences—perhaps they preferred to feed the dog themselves—and start to make the pancakes for it.

- It allows the dog access into the kitchen.

- The dog is starving and can’t wait for the food to finish being prepared, so it tries to get the food directly off the stove, which starts the fire.

- The house should try to contact the long-gone fire department.

- The house should keep recommending contextual fire safety and escape tips as it burns sadly to the ground. (Yes, even though it can’t detect that people are there—this would be smart in case the sensors were wrong.)
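The dog-feeding bullets capture the agentive idea in miniature: the house acts within the owners’ preferences until a critical situation justifies overriding them. Here is a minimal Python sketch of that rule; the preference field, the condition labels, and the action names are hypothetical assumptions, not anything specified in the story.

```python
from dataclasses import dataclass


@dataclass
class Preferences:
    """Hypothetical owner preferences; the field name is an assumption."""
    owners_feed_dog: bool = True  # the family likes to feed the dog themselves


def plan_dog_care(prefs: Preferences, dog_condition: str) -> list[str]:
    """Return the house's actions for the dog, in order."""
    if dog_condition != "critical":
        # Routine days: act on the owners' behalf only where they've delegated.
        return [] if prefs.owners_feed_dog else ["prepare_food"]
    # Critical: get help, then override the preference and feed the dog.
    return ["call_veterinarian", "prepare_food", "open_kitchen"]


print(plan_dog_care(Preferences(), "healthy"))   # []
print(plan_dog_care(Preferences(), "critical"))
# ['call_veterinarian', 'prepare_food', 'open_kitchen']
```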

With these speculative changes, the story would tell a sad tale of a system that is well designed, but still going through its routines and doing its best to serve masters who are no longer there, right down to the final moments as it all comes crashing down.

If you want to learn more about the agentive concepts I’ve mentioned, my book on the subject is published by Rosenfeld Media.

Readers interested in my update to Bradbury’s story (based on these critiques) can see it play out once a year on 04 AUG in real-time on the Twitter feed: https://twitter.com/warmwinds2026